AWS Certified Developer Associate: Difference between revisions


| Line 358: | Line 367: | ||

Contains S3 Buckets | Contains S3 Buckets | ||

By default buckets are closed. We need to add a policy to open it | By default buckets are closed. We need to add a policy to open it | ||

* Defined to region level | |||

* Data Storage | * Data Storage | ||

* Media Distribution | * Media Distribution | ||

* Backup destination | * Backup destination | ||

* Bucket names are unique through AWS | |||

==Objects== | |||

* Store Objects : '''by key = Entire Full Path''' | |||

** '''There is no concept as directories, only very very long key names''' | |||

* Object values = file content max = 5TB | |||

'''If it is need to upload a file of more 5GB, must use "multi-part upload"''' | |||

* '''Best Practice'''Version id if versioning is enabled | |||

==Creation== | ==Creation== | ||

# DNS Compliant Name | # DNS Compliant Name | ||

| Line 369: | Line 386: | ||

## ... | ## ... | ||

==Encryption== | ==Encryption== | ||

'''Important to know in the exam''': wich method of encryption is for what situation | |||

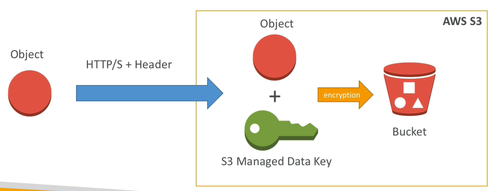

===SSE-S3=== | |||

* Encrypts s3 objects using keys handled & managed by '''AWS''' | |||

[[ | * Encrypted server side | ||

* AWS-256 type | |||

* Must set header '''"x-amz-server-side-encryption":"AWS256"''' | |||

[[File:EncryptionSSE-S3.png|500px]] | |||

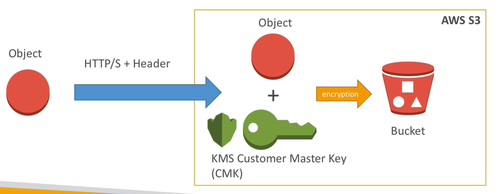

===SSE-KMS=== | |||

* Encrypts s3 objects using keys handled & managed by '''KMS''' | |||

* Encrypted server side | |||

* Leverage AWS Key management service to manage encryption key | |||

* + audit fail | |||

* Must set header '''"x-amz-server-side-encryption":"aws:kms"''' | |||

[[File:EncryptionSSE-KMS.png|500px]] | |||

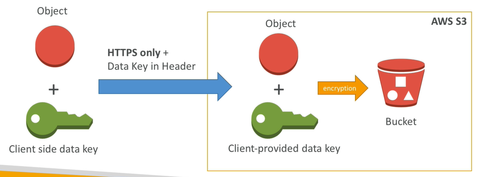

===SSE-C=== | |||

* When you want to manage your own encryption keys outside of AWS | |||

* Encrypted server side | |||

* '''HTTPS must be used''' | |||

* Encryption key must be provided in HTTP Headers for every HTTP request made | |||

[[File:EncryptionSSE-C.png|500px]] | |||

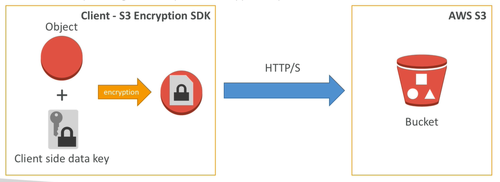

===Client Side Encryption=== | |||

* CLient library such as Amazon S3 Encryption Client | |||

* '''Clients must encrypt data''' themselves before sending to S3 | |||

* '''Clients must decrypt data''' thenseleves when retrieving from s3 | |||

* Customer fully manages the keys and encryption cycle | |||

[[File:EncryptionClientSideEncryption.png|500px]] | |||

===Encryption in transil SSL=== | |||

AWS Exposes: | |||

* HTTP Endpoint: non encrypted | |||

* HTTPS Endpoint: encryption in flight | |||

<span style="color:green">'''Encryption Flight is also called SSL/TLS in the exam !'''</span> | |||

==Versioning== | ==Versioning== | ||

* Can specify max duration for files versions | * Can specify max duration for files versions | ||

| Line 407: | Line 457: | ||

* Two factor authentication supported | * Two factor authentication supported | ||

* custom logins and registration | * custom logins and registration | ||

=SES: Simple Email Service= | =SES: Simple Email Service= | ||

* Verification Email | * Verification Email | ||

| Line 556: | Line 607: | ||

* Separate Public from Private trafic | * Separate Public from Private trafic | ||

* can be internal or external | * can be internal or external | ||

===Stickiness=== | |||

Allow users to stay connected between the differents calls over HTTP Load balancing them on different EC2 instances | |||

==CLB: Classic Load Balancer== | ==CLB: Classic Load Balancer== | ||

'''Deprecated''' | '''Deprecated''' | ||

| Line 635: | Line 687: | ||

* Cannot be resized

* Backups must be operated by the user

=Route 53=

* Public / private domains

* Load balancing through DNS

* Health checks

* Routing policy

* CNAME = performance reasons

==Domain==

==Hosted Zones==

Default record sets:

* NS

* SOA

Create:

* A record to Load Balancer

* CNAME for subdomains

=RDS: Relational Database Service=

PostgreSQL, Oracle, MySQL, MariaDB, Microsoft SQL Server, Aurora

* ASYNC

* Cross AZ or Cross Region

* Up to 5

* Usually deployed within a private subnet

* RDS security works by leveraging security groups, like EC2 instances

* IAM = who can MANAGE RDS

* Username / password can be used, or an IAM user

* Default port: 3306 (MySQL)

* DB instance identifier is unique across regions

==On EC2==

* Continuous backups

* OS patching level

* Replicas

* Multi AZ for DR: Disaster Recovery

* Maintenance windows

* Scaling capability

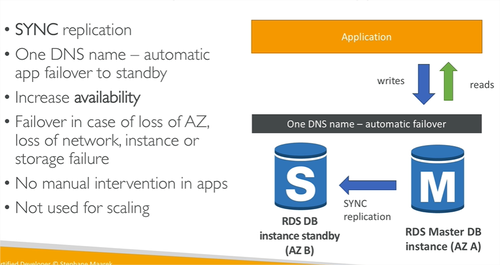

==Multi AZ==

Used for disaster recovery

[[File:RDS-Sync.png|500px]]

==Backups==

* Automatically enabled

===Automated Backups===

* Daily snapshot

* Capture transaction logs in real time

* Restore to ANY point in time

* 7 days retention

===Snapshots===

* Manually triggered

* Retention of backup for as long as you want

==Encryption==

Difference between enforcing SSL and connecting with SSL: Connecting using SSL means SSL is necessarily enforced.

* KMS - AES-256

* SSL in flight.

* '''To Enforce SSL''':

** PostgreSQL:

<source lang="shell">rds.force_ssl=1</source>

** MySQL:

<source lang="MySQL">GRANT USAGE ON *.* TO 'mysqluser'@'%' REQUIRE SSL;</source>

=Aurora=

A proprietary technology from AWS - not Open Source

* Compatible with PostgreSQL and MySQL drivers

* '''Cloud optimized: 5 times the performance of MySQL or 3 times the performance of PostgreSQL'''

* Storage grows in 10 GB increments up to 64 TB

* can have 15 replicas

* faster for replication - sub 10 ms replica lag

* High availability is native, failover is instantaneous

* costs 20% more than RDS

* '''is not free-tier compatible'''

=AWS ElastiCache=

* To get managed Redis or Memcached

* Caches are in-memory databases with really high performance and low latency

* Read-intensive workloads

* Helps make your application stateless

* Write scaling using sharding

* Multi AZ with failover

* AWS takes care of: maintenance, patching, optimizations, setup, configuration, monitoring, failure recovery, backups

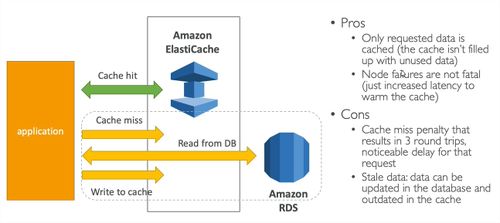

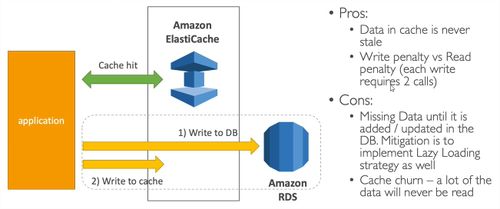

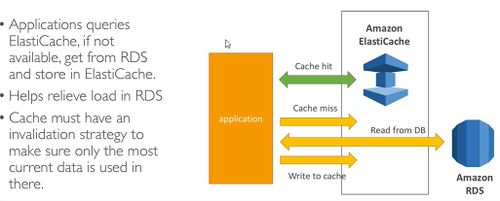

==ElastiCache Patterns==

===Lazy Loading===

[[File:ElastiCacheLazyLoading.jpg|500px]]

===Write Through===

[[File:ElastiCacheWriteThrough.jpg|500px]]
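The two patterns named above can be sketched without any AWS dependency. This is a minimal illustration only: plain dictionaries stand in for the cache (Redis or Memcached) and for the database, and the class name `CachedStore` and its methods are hypothetical, not part of any AWS SDK.

```python
class CachedStore:
    """Toy model of lazy loading and write-through; dicts stand in for cache and DB."""

    def __init__(self, database: dict):
        self.database = database  # stand-in for RDS
        self.cache = {}           # stand-in for ElastiCache
        self.db_reads = 0         # counts how often the database is hit

    def get(self, key):
        """Lazy loading: read the cache first, fall back to the database on a miss."""
        if key in self.cache:
            return self.cache[key]      # cache hit
        self.db_reads += 1              # cache miss: read from the database
        value = self.database[key]
        self.cache[key] = value         # populate the cache for the next read
        return value

    def put_write_through(self, key, value):
        """Write-through: update the database and the cache in the same operation."""
        self.database[key] = value
        self.cache[key] = value


if __name__ == "__main__":
    store = CachedStore({"user:1": "Alice"})
    store.get("user:1")                 # miss, loads from the database
    store.get("user:1")                 # hit, served from the cache
    store.put_write_through("user:2", "Bob")
    print(store.db_reads)
```

With lazy loading only requested data is cached, at the cost of a slower first read; with write-through the cache is never stale, at the cost of a slower write.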

==Architecture Patterns==

===DB Cache===

[[File:ElastiCacheDbCache.jpg|500px]]

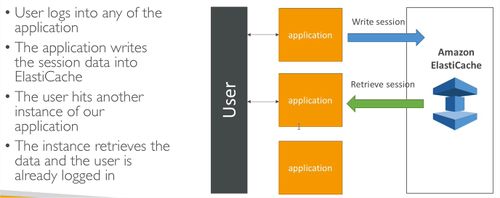

===User Session Store===

* Number 1: relieve load off the database

* Number 2: share some state: the user session store is kept on common ground so that all the applications can be stateless and read and write these sessions in real time

[[File:ElastiCacheUserSessionStore.jpg|500px]]

==Redis==

* In-memory key-value store

* Super low latency

* Persistence: can survive reboots by default

* '''For: user sessions, leaderboards for gaming ==> there is a sorting capability, distributed states, relieving pressure on RDS databases, pub/sub for messaging'''

* Multi-AZ with auto failover for disaster recovery

* supports read replicas

* Cluster mode enabled => more persistence and more robustness

==MemCached==

* In-memory object store

* doesn't survive reboots

* Quick retrieval of objects from memory

* Cache often-accessed objects

* Redis is largely popular

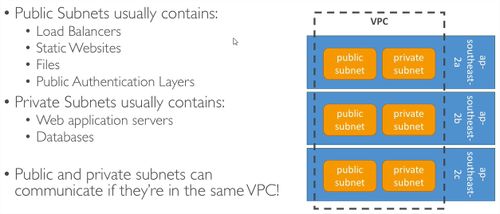

=AWS VPC: Virtual Private Cloud=

* '''VPCs are per account, per region'''

* Each VPC contains subnets

* It is common to have:

** a public subnet

** a private subnet

** many subnets per AZ

* it is possible to use a VPN to connect to a VPC

[[File:VPC.jpg|500px]]

===VPC FlowLogs===

Allow you to monitor traffic within, into and out of your VPC

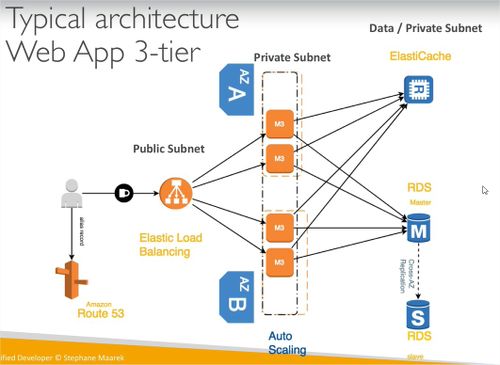

=3-Tier Architecture=

[[File:3TierArchitecture.jpg|500px]]

Latest revision as of 04:55, 21 October 2020

More

Cloud Trail

AWS CloudTrail is a service that enables governance, compliance, operational auditing, and risk auditing of your AWS account. With CloudTrail, you can log, continuously monitor, and retain account activity related to actions across your AWS infrastructure.

CloudTrail provides an event history of your AWS account activity, including actions taken through the AWS Management Console, AWS SDKs, command-line tools, and other AWS services.

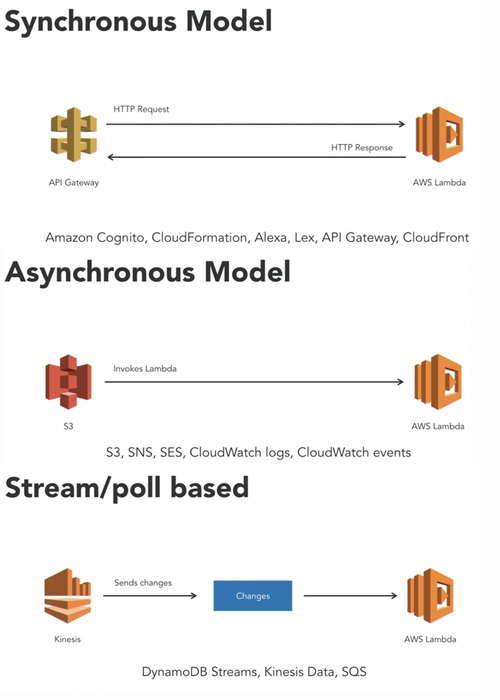

API Gateway

Amazon API Gateway is a fully managed service that makes it easy for developers to publish, maintain, monitor, and secure APIs at any scale. Using API Gateway, you can create an API that acts as a “front door” for applications to access data, business logic, or functionality from your back-end services, such as EC2 or Lambda functions. https://aws.amazon.com/api-gateway/

More information

- Exam Guide: File:Aws exam guide.pdf

- Course: https://www.udemy.com/course/aws-certified-developer-associate-dva-c01

- More information about the AWS Certified Developer Associate certification: https://aws.amazon.com/fr/certification/certified-developer-associate/

Amazon Web Services

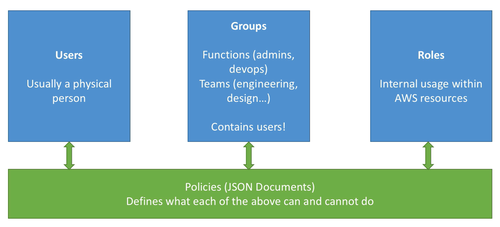

IAM Federation

- Least privilege principle

- is not region-dependent

- Roles are collections of policies that are assigned to services

- Policies can be assigned to groups as well

Never write credentials into the code: someone will mine bitcoin with them within seconds, and we will get a $22,000 bill in one day

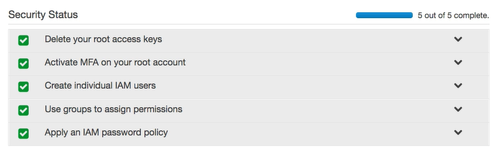

IAM Password Policy

Guarantees that IAM users create strong passwords and that these passwords change often

Security

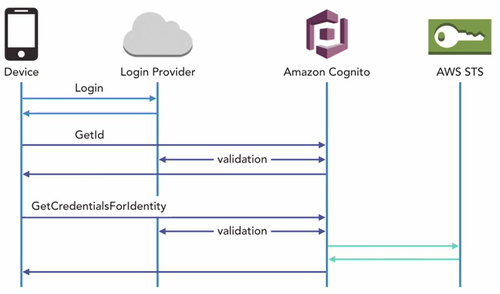

User pools

Directories that provide sign-up and sign-in options for your app users. They associate users' logins with their profiles.

Anonymous Access

Anonymous Access creates Identity pools for you.

Identity Pools with Cognito

Identity pools provide AWS credentials to grant your users access to other AWS services.

Roles

Access policies for a non-user identity. Roles are collections of policies that are assigned to services.

Key point

- Always prefer roles over users and access key pairs.

IAM: Identity and Access Management

In IAM, which areas need to be considered with restrictions and access? Computing, Storage, Database, and App services

IAM Groups

Helps you avoid repeating yourself when assigning policies to users individually

Policies

- Policies specify specific permissions

- written in JSON
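As a minimal sketch of what such a JSON policy looks like, the snippet below builds one as a Python dictionary and serializes it. The bucket name `example-bucket` and the single action are illustrative assumptions, not taken from the course.

```python
import json

# Hypothetical read-only policy for one bucket; "example-bucket" is an assumed name.
policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",                            # Allow or Deny
            "Action": ["s3:GetObject"],                   # the specific permission granted
            "Resource": "arn:aws:s3:::example-bucket/*",  # what the permission applies to
        }
    ],
}

# The JSON text is what would be attached to a user, group or role.
policy_json = json.dumps(policy, indent=2)
print(policy_json)
```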

Development

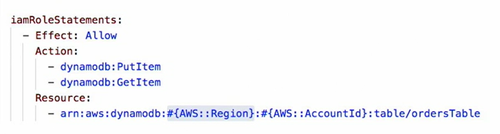

DynamoDb

Schema-less database that only requires a table name and a primary key.

Can you use Oracle RDBMS with DynamoDB? No; DynamoDB is a non-relational database, and Oracle is a relational database.

Lambda

AWS Lambda is a computing service that lets you run code without provisioning or managing servers.

Messaging and Event Driven

Message => step functions => event/lambda => SNS => SQS => Lambda

States Machines

State machines are made up of states, their relationships, and the input and output defined by the Amazon States Language.

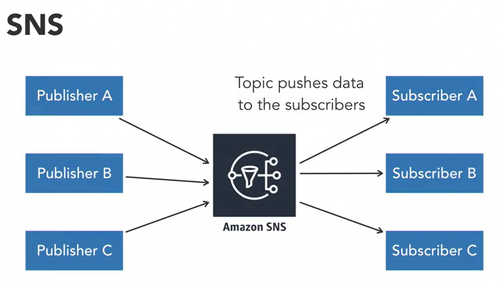

SNS

SNS pushes its messages out to its subscribers.

SQS

SQS stores the messages until someone reads them and processes them off the queue. SQS is useful for sending and receiving messages between apps.

Deployment, Scalability and Monitoring

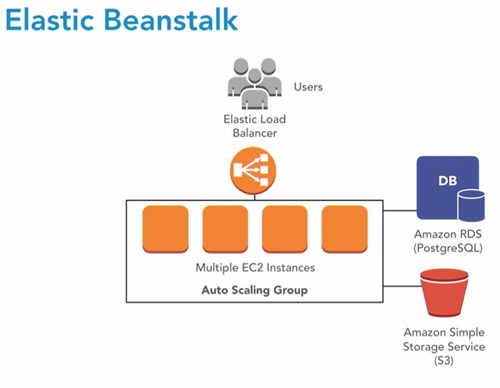

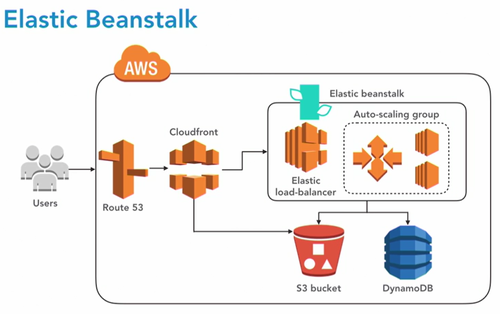

Elastic Bean Stalk

Deploy and scale web apps and services

CloudFormation Stacks

Configure and Maintain system

CloudFormation

Provisions and manages stacks of AWS resources based on the template you created to model your infrastructure

Elasticache

Service to launch, scale and manage a distributed in-memory cache. Caching is great for performance and efficiency.

Cluster

- Redis

- Memcache

CloudFront

Global Content Delivery Network. CloudFront securely and quickly delivers data, video, applications, and APIs. Content is served from edge locations close to the users, which means shorter distances and higher performance.

Cloud Watch

You can create high-resolution alarms and automated actions. These alarms can monitor costs for your budget, metrics, events, and logs. CloudWatch alarms are part of Elastic Beanstalk, and there are two of them. What are the two alarms? They monitor load, and they trigger when the load is too high or too low for the auto scaling group.

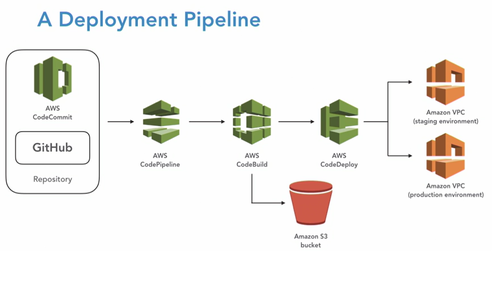

AWS: Deploying Your Application to the cloud

Code Commit

Git-compatible, secure and scalable source control. Can be triggered by AWS tools. It is recommended to use an IAM user; IAM has a specific section for CodeCommit credentials. Recommended: use the master branch as a trigger for deployments.

- Access: CodeCommitFullAccess

How can you move files from one source control management tool to CodeCommit ? Create an empty repo. Clone your empty codecommit repo. Manually copy and commit the files into it. Then push them to the repo.

Commands

<source lang="shell">
aws configure
git --version
# "Your Name" is a placeholder value
git config --global user.name "Your Name"
</source>

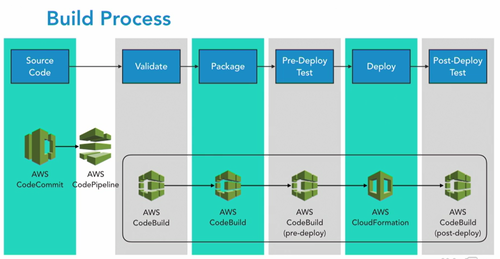

Code Build

Archive or War file

buildspec.yml

- phases : install : commands

- phases : install : finally

The Java SDK and Maven have to be installed. We can have more than one buildspec file.

- How can you pass values to control your CodeBuild scripts? Use environment variables.

- Where can you find your build logs?

- CloudWatch logs

- CodeBuild console

Create Build Project

- Choose a managed image

- We are allowed to create a role

- Timeout is very important

- Specify a VPC if we need to access other servers or services during the build, but it isn't very common

- Choose s3 and a bucket to store the artifacts

- Standard format is zip

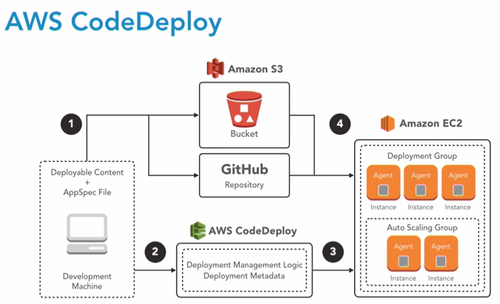

Code Deploy

- appspec.yml : tells codedeploy what to do with our generated binaries and any additional files we may need

- Generally: takes the web archive and copies it to the webapps folder of a Tomcat web server, where Apache Tomcat deploys it automatically

- Files: source / destination

Deployment

Deployment groups

a way to identify the servers we are deploying to

- Name

- Role of EC2 to code deploy

- Choose to uncheck the Load Balancer

- triggers, alarms, rollbacks

Create deployment

Now that we have an application and a deployment group, we can create a deployment

- Which files to deploy

- where to deploy them

s3 URL

s3://bucketname/file

- Ruby is required by the CodeDeploy agent

- Download an install file:

<source lang="shell">
wget https://aws-codedeploy-us-west-1.s3.amazonaws.com/latest/install
chmod +x ./install
sudo ./install auto
sudo service codedeploy-agent status
</source>

Important to check: EC2 Console > right-click server > Settings > IAM Role > S3Admin

- CodeDeploy, through its agent, will reach out to our S3 bucket to fetch the deployment binary

KeyPoints

What's needed to create a revision .ZIP file? An AppSpec and the code binaries

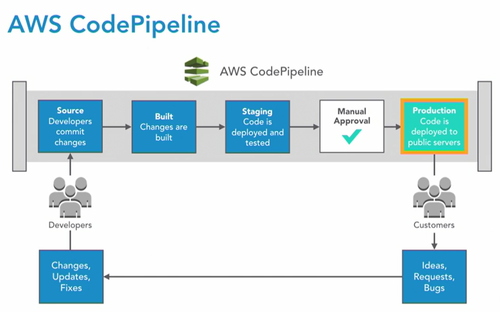

Code Pipeline

Source > Build binary > Test > Production

Create a pipeline

- Create a role: this role allows CodePipeline to access the particular pipeline which will be created

- Even AWS itself does not have access to resources within our account unless we grant privileges

- Specify a branch

- Choose detection option: CodePipeline vs CloudWatch

Configure the build stage

- Choose a build provider: codeBuild

- Configure: operating system, runtime, runtime version = can be upgraded in the buildspec.yml file

Deploy Stage

- Select Application and Deployment group

Go

As soon as the pipeline is created, it is running so make sure it is what you want

Key Points

- Continuous integration and deployment pipeline

- various tools

- Passes the output to the next stage

- Jenkins-like; the difference is that it is a service

- simple to complex workflows

- Support approvals

When is it a good practice to add a CodePipeline approval step? During deployment to servers that are being used regularly. Disrupting QA testers by taking down their server unannounced is a bad practice. You should consider an approval for this, just like production deployment approvals.

- It is possible to generate more than one artifact on one build

EBS: Elastic BeanStalk

What is beanstalk doing ?

- HighAvailability

- Backups

- Health Checks

- Alarms

- Track Metrics

- Code deploy agents

- database: constant patching, updates, backups, monitoring

Create

what will we do

- Create a server

- give it a domain name

- install all the required software

- handle the deployment

- handle execution

Environment

In Beanstalk, an application is a container for environments. The environment is the application architecture; it is made up of:

- application servers

- web servers

- load balancers

- auto scaling group

- database

- ....

By default: web-based application. The "ugly" domain name can be aliased with a CNAME, but if we want to change it, the environment has to be deleted.

Finally: It will trigger a cloud formation build

Use it with code pipeline

- send the compiled Java classes directly to Beanstalk

- create a secondary buildspec.yml file that is going to create an artifact

HOW TO: Pipeline > Edit > Edit Stage > Add Action > Create Project > Configure > Specify the name of the new buildspec.yml file > Give a name to the multiple output artifacts

KeyPoints

- deploys and manages web or background worker apps

- Helps with updates, health checks and alarms

- deployment strategies such as CodeDeploy

- there is a CLI

- Elastic Block Store for RDS

- YML or JSON config files in .ebextensions

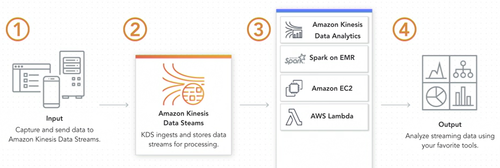

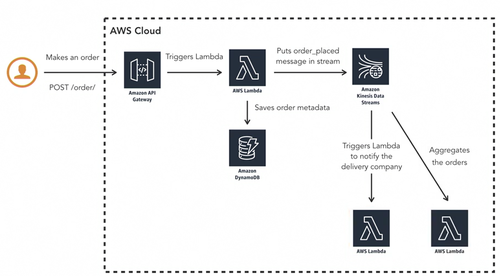

Data-Driven Serverless Applications with Kinesis

How to create a production-ready application that can scale, be cost efficient, and support real-time input of data. Serverless architecture in the cloud and infrastructure as code:

- very scalable backend

- pay what we use

- easy to maintain and extend

Serverless

- pay for what you use

- no need to manage infrastructure

- the system automatically scales

BaaS

DynamoDB, Algolia, Auth0

FaaS

Born in 2014 with AWS Lambda. Every function is in a virtual container run and killed by every call of the function. The functions are triggered by events in AWS like HTTP Request, database change, new file, Message in queue ...

AWS Lambda

Pricing

- pay for what you use

- charged based on the number of requests

- charged on the duration of execution

More

- function(

- Event Object: Data sent during invocation

- Context Object: Methods available to interact with the runtime information

- Callback: return information to the invoker

- );
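The three arguments above follow the Node.js signature. As a hedged sketch of the same idea in Python, a handler receives only the event and the context, and its return value plays the role of the callback. The `name` field in the event and the response shape are assumptions chosen for illustration.

```python
import json


def handler(event, context):
    """Entry point Lambda would invoke; `event` carries the data sent during invocation."""
    name = event.get("name", "world")  # "name" is a hypothetical event field
    # Returning a value hands the result back to the invoker (the callback's job in Node.js).
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Local invocation for testing; the context object is unused here, so None is passed.
    print(handler({"name": "developer"}, None))
```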

Framework 101

- infrastructure as code: define your serverless application in a configuration file

- provider agnostic: manage multiple clouds with one tool

- extensible with plugins: Open Source & big community

- Multilingual: Java, TypeScript, Python ...

- Deploy functions and resources without needing to go to the console, just with commands

- Application lifecycle management: built-in support for local development stations

- Streaming logs

how to

<source lang="shell">
npm install -g serverless
serverless config credentials --provider aws --key ****** --secret ****
</source>

Go to Users > User > Security credentials > Create an access key

Infrastructure as code

Improve the quality of the infrastructure by

- Test it and review it

- high level programming

- can be replicated

The script is in YML

CloudFormation

Provides a common language to describe and provision all the infrastructure resources in a cloud environment.

- Template: JSON or YML

- Automated

- secure

- all the resources needed for the application ...

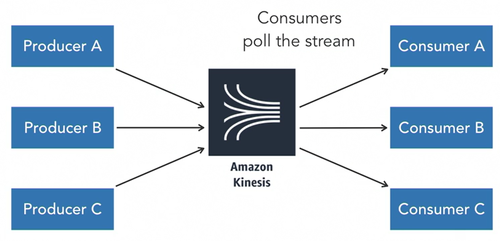

Kinesis

Collect, process and analyse real-time streaming data

Capabilities

- Data Streams

- Video Streams

- Data Firehose

- Data Analytics

Data Streams

Enables real-time processing of streaming big data, for example when a lot of orders are expected and they need to be processed in real time

- Shard: base throughput unit of the stream

- append-only log

- ordered sequence of records

- ingest 1 MB/s

- Number of shards needed when creating the stream

- Data Stream: Logical grouping of shards

- Data retention between 24 hours and 7 days

- Partition Key: meaningful identifier

- Used to route data records to the different shards

- Sequence Number: unique identifier for each data record

- Data record: Unit of data stored

- Composed of sequence number, partition key and data blob

- Data blob: data your producer adds to the stream, max 1 MB

- Data Producer: application that emits data records to the stream

- Assigns partition keys to records

- Data consumer: Kinesis application or AWS service that gets the data from the stream
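To make the routing role of the partition key concrete, here is a small sketch. Kinesis hashes the partition key with MD5 into a 128-bit integer, and each shard owns a range of that hash space. The sketch assumes the shards split the space evenly, which holds for a freshly created stream but not necessarily after resharding; the function name `shard_for` is hypothetical.

```python
import hashlib

HASH_SPACE = 2 ** 128  # MD5 yields 128-bit hash keys


def shard_for(partition_key: str, shard_count: int) -> int:
    """Return the index of the shard whose hash range contains the key (even split assumed)."""
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    hash_value = int.from_bytes(digest, "big")
    range_size = HASH_SPACE // shard_count
    # The last shard absorbs the remainder left by the integer division.
    return min(hash_value // range_size, shard_count - 1)


if __name__ == "__main__":
    for key in ("order-1", "order-2", "customer-42"):
        print(key, "-> shard", shard_for(key, 4))
```

Records with the same partition key always land on the same shard, which is what preserves their order.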

| Databases | Stream |

| Data is static | Data is dynamic |

| Keep the system decoupled |

SQS

Amazon Simple Queue Service is:

- Fully managed queuing service

- decouple and scale serverless applications

The receiver has to pull the messages; they are not pushed, in contrast to SNS.

Limitations

- Single Consumer

- No replay ability

SNS: Amazon Simple Notification Service

- Fully Managed Pub/sub messaging service

- decoupled serverless applications

SQS vs SNS vs Kinesis Streams

| Kinesis Streams | SQS | SNS |

| Real time processing of streaming big data | Fully managed queue; single consumer | Fully managed pub/sub; messages are pushed to the consumers immediately |

S3: Simple Storage Service

Contains S3 buckets. By default buckets are closed; we need to add a policy to open them

- Defined to region level

- Data Storage

- Media Distribution

- Backup destination

- Bucket names are unique through AWS

Objects

- Objects are stored by key = the entire full path

- There is no concept of directories, only very long key names

- Object values = file content, max 5 TB

If you need to upload a file larger than 5 GB, you must use "multi-part upload"

- Best PracticeVersion id if versioning is enabled
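A rough sketch of the upload decision described above: a single PUT accepts up to 5 GB, and anything larger has to be split into parts. The 10,000-part limit and the 5 MiB minimum part size are S3 limits; the default part size of 100 MiB and the function name `plan_upload` are assumptions for illustration.

```python
import math

MIB = 1024 ** 2
GIB = 1024 ** 3
SINGLE_PUT_LIMIT = 5 * GIB  # largest object a single PUT accepts
MAX_PARTS = 10_000          # S3 limit on parts per multipart upload
MIN_PART_SIZE = 5 * MIB     # minimum size of every part except the last


def plan_upload(size_bytes: int, part_size: int = 100 * MIB):
    """Return ("single", 1) or ("multipart", number_of_parts) for an object size."""
    if size_bytes <= SINGLE_PUT_LIMIT:
        return ("single", 1)
    part_size = max(part_size, MIN_PART_SIZE)
    # Grow the part size when needed so the upload stays within MAX_PARTS.
    part_size = max(part_size, math.ceil(size_bytes / MAX_PARTS))
    return ("multipart", math.ceil(size_bytes / part_size))


if __name__ == "__main__":
    print(plan_upload(1 * GIB))
    print(plan_upload(6 * GIB))
```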

Creation

- DNS Compliant Name

- Upload file

- Properties: OR

- Static WebSite Hosting

- Versioning

- ...

Encryption

Important to know in the exam: which method of encryption is for what situation

SSE-S3

- Encrypts s3 objects using keys handled & managed by AWS

- Encrypted server side

- AES-256 type

- Must set header "x-amz-server-side-encryption":"AES256"

SSE-KMS

- Encrypts s3 objects using keys handled & managed by KMS

- Encrypted server side

- Leverage AWS Key management service to manage encryption key

- + audit trail

- Must set header "x-amz-server-side-encryption":"aws:kms"

SSE-C

- When you want to manage your own encryption keys outside of AWS

- Encrypted server side

- HTTPS must be used

- Encryption key must be provided in HTTP Headers for every HTTP request made
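The three server-side modes differ mainly in the request headers they need. The helper below is a minimal sketch that only builds those headers; it sends nothing to S3. `AES256` is the literal value S3 expects for SSE-S3, and the SSE-C headers carry the base64-encoded key plus the base64-encoded MD5 digest of the key. The function name `sse_headers` and the key alias in the usage lines are hypothetical.

```python
import base64
import hashlib


def sse_headers(mode: str, kms_key_id: str = None, customer_key: bytes = None) -> dict:
    """Build the S3 request headers for SSE-S3, SSE-KMS or SSE-C."""
    if mode == "SSE-S3":
        return {"x-amz-server-side-encryption": "AES256"}
    if mode == "SSE-KMS":
        headers = {"x-amz-server-side-encryption": "aws:kms"}
        if kms_key_id:  # optional: without it the default KMS key for S3 is used
            headers["x-amz-server-side-encryption-aws-kms-key-id"] = kms_key_id
        return headers
    if mode == "SSE-C":
        if customer_key is None or len(customer_key) != 32:
            raise ValueError("SSE-C needs a 256-bit (32-byte) key")
        key_b64 = base64.b64encode(customer_key).decode("ascii")
        md5_b64 = base64.b64encode(hashlib.md5(customer_key).digest()).decode("ascii")
        return {
            "x-amz-server-side-encryption-customer-algorithm": "AES256",
            "x-amz-server-side-encryption-customer-key": key_b64,
            "x-amz-server-side-encryption-customer-key-MD5": md5_b64,
        }
    raise ValueError(f"unknown mode: {mode}")


if __name__ == "__main__":
    print(sse_headers("SSE-S3"))
    print(sse_headers("SSE-KMS", kms_key_id="alias/example-key"))  # assumed alias
```

Because SSE-C puts the key itself in the headers, the request has to go over HTTPS.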

Client Side Encryption

- Client library such as Amazon S3 Encryption Client

- Clients must encrypt data themselves before sending to S3

- Clients must decrypt data themselves when retrieving from S3

- Customer fully manages the keys and encryption cycle

Encryption in transit (SSL)

AWS Exposes:

- HTTP Endpoint: non encrypted

- HTTPS Endpoint: encryption in flight

Encryption in flight is also called SSL/TLS in the exam!

Versioning

- Can specify max duration for files versions

Important files

- Use bucket policies to restrict access to buckets and S3

- uses SSL for in-transit data encryption

- MFA: Multi-Factor Authentication activation is important

Presigned URL

Share only one file without compromising security of the whole S3 bucket

- Expiring URLs based on a time period

- Share one file and not all the bucket

- AWS CLI

KMS: Key Management Service

- fully managed encryption key management service

- highly available, secure and compliant

- can import key

- Seamlessly integrates with several AWS services

- KMS keys are regional and cannot be exported

- Key deletion has to be scheduled

- key rotation is a good practice and can be automated

- All keys have a policy to define who can use the key

Cognito

- Social Identity

- User pools

- Enterprise identity

- Data synchronization

- Manage access to your APIs and AWS resources

SAML: Security Assertion Markup Language

Open standard for exchanging authentication and authorization data between our identity providers and our service providers. Example of a SAML identity provider: Active Directory

- Two factor authentication supported

- custom logins and registration

SES: Simple Email Service

- Verification Email

- High volume

- Great uptime

- Use any from in your domain

- Cheap

- Sending metrics and alarms with CloudWatch

- Can be used through WordPress

- SES Bounce notifications with SNS

DKIM: Domain Keys Identified Mail

Provide proof that your email is authentic with DKIM Signature stored in DNS System

- System is provided with Private and Public key

- > nslookup domain.com

SMTP: Simple Mail Transfer Protocol

- Server name

- Port number

- Encryption

- Username & Password

- SMTP Error: 250 = mail action ok

- SMTP Error: 550 = mailbox unavailable

Security

EICAR: European Institute for Computer Antivirus Research

- There is a harmless sample file that looks like a virus, used just to test the email service

Lambda Functions to handle SES Bounces

- Configure permissions

- Make sure the Enable Trigger box is checked

MySQL

- Connect the lambda function to any MySQL Database Server

EC2: Elastic Compute Cloud

- RAM

- CPU

- I/O: Disk performance, EBS Optimisations

- NETWORK

- GPU

Comes by default with

- Private IP for Internal AWS Network

- a Public IP for WWW ==> it can change !

SSH

- use only the public IP

- Permissions 0644 ==> Permission denied / bad permissions

- It is required that your private key files are NOT accessible by others. This private key will be ignored.

- Because the private key can leak, the least privilege principle applies to it

- change the permissions with chmod 0400 private.key
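The check that SSH performs can be approximated in a few lines: a key file is rejected when the group or others have any permission on it. The sketch below mirrors that rule and applies the 0400 fix; the function names are hypothetical and the key file is a temporary stand-in, not a real private key.

```python
import os
import stat
import tempfile


def is_private_enough(path: str) -> bool:
    """True when neither the group nor others have any permission on the file."""
    mode = stat.S_IMODE(os.stat(path).st_mode)
    return mode & 0o077 == 0


def lock_down(path: str) -> None:
    """Equivalent of `chmod 0400`: read-only for the owner, nothing for anyone else."""
    os.chmod(path, 0o400)


if __name__ == "__main__":
    fd, key_path = tempfile.mkstemp(suffix=".pem")  # stand-in for a downloaded key
    os.close(fd)
    os.chmod(key_path, 0o644)
    print("0644 accepted?", is_private_enough(key_path))
    lock_down(key_path)
    print("0400 accepted?", is_private_enough(key_path))
    os.remove(key_path)
```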

AMI: Amazon Machine Image

An AMI created for a region can only be seen in that region.

An Amazon Machine Image (AMI) provides the information required to launch an instance. You must specify an AMI when you launch an instance. You can launch multiple instances from a single AMI when you need multiple instances with the same configuration. You can use different AMIs to launch instances when you need instances with different configurations.

An AMI includes the following:

- One or more EBS snapshots, or, for instance-store-backed AMIs, a template for the root volume of the instance (for example, an operating system, an application server, and applications).

- Launch permissions that control which AWS accounts can use the AMI to launch instances.

- A block device mapping that specifies the volumes to attach to the instance when it's launched.

It can go with

- Amazon Linux

- Ubuntu

- Windows

- ...

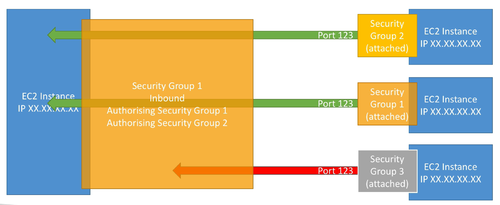

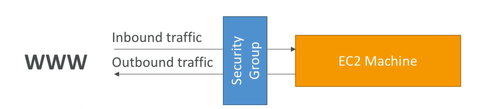

Security Group

- One separate security group for SSH access

- can be attached to multiple instances

- Locked down to a region/VPC combination

- Application timeout = security group issue

- Connection Refused = Application error

- does live OUTSIDE the EC2

- 0.0.0.0/0 means any IP

- ::/0 means any IPv6 address

- All inbound traffic is blocked by default

- Outbound: all traffic is allowed by default

Can reference all of the following:

- IP address

- CIDR Block

- Security Group

But not

- DNS Name
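How CIDR-based inbound rules behave can be sketched with the standard `ipaddress` module. This is a simplified model: rules are plain `(cidr, port)` pairs, references to other security groups are left out, and the name `is_allowed` and the sample addresses are assumptions.

```python
import ipaddress


def is_allowed(source_ip: str, port: int, rules: list) -> bool:
    """Inbound check: allowed only if some (cidr, port) rule matches; default is deny."""
    addr = ipaddress.ip_address(source_ip)
    for cidr, rule_port in rules:
        network = ipaddress.ip_network(cidr)
        # IPv4 rules never match IPv6 sources and vice versa.
        if addr.version == network.version and addr in network and port == rule_port:
            return True
    return False


if __name__ == "__main__":
    rules = [("0.0.0.0/0", 80), ("203.0.113.0/24", 22)]  # HTTP from anywhere, SSH from one range
    print(is_allowed("198.51.100.7", 80, rules))
    print(is_allowed("198.51.100.7", 22, rules))
```

A request that matches no rule never reaches the instance, which is why a blocked port shows up as a timeout rather than a refused connection.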

Elastic IPs

- Public IPv4

- Rapidly remap the address to another instance in your account

- by default, 5 elastic IPs on AWS

- Try to avoid using it ==> Poor Architectural decisions ==> Use random IP + DNS (route 53)

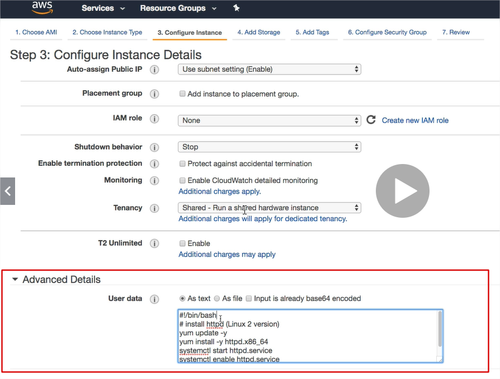

EC2 User Data

Bootstrapping means launching commands when a machine starts

- it is possible to bootstrap our instances (launch commands or a script ONCE, when a machine first starts) using an EC2 User Data script

automate boot tasks such as:

- installing updates

- installing software

- downloading common files from the internet

- anything you can think of

User Data is automatically run with the SUDO command

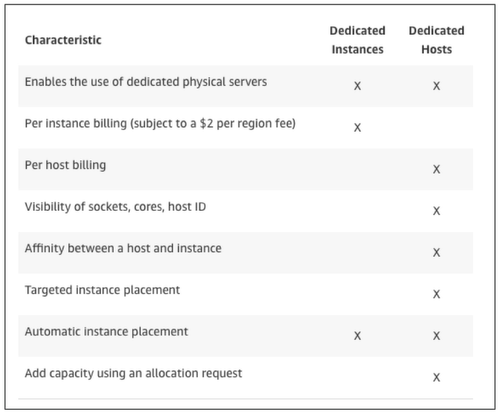

EC2 Instance Launch Types

- On Demand Instances: short workloads, predictable pricing

- Recommended for auto scaling

- Reserved Instances: long workloads >= 1 year

- Convertible Reserved Instances: long workloads with flexible instances

- Can change the EC2 instance type - up to 54% discount

- Scheduled Reserved Instances: launch within time window you reserve

- Spot Instances: short workloads, for cheap, can lose instances

- Batch jobs, Big Data analysis, workloads that are resilient to failure

- not for critical jobs or databases

- Dedicated Instances: no other customers will share your hardware

- Dedicated Hosts: book an entire physical server, control instance placement

- software with complicated licensing model (BYOL)

Install Apache

<source lang="shell">
sudo su
# -y answers yes to every prompt
yum update -y
yum install -y httpd.x86_64
systemctl start httpd.service
systemctl enable httpd.service
# load whatever is at the URL
curl localhost:80
</source>

T2 Unlimited

is a new concept from nov 2017. It is possible to have an "Unlimited Burst Credit Balance"

Good To Know

- SSH on EC2: change .pem permissions

- Security Groups: opening ports, IP security ...

- Difference Private / Public / Elastic IP

- User Data allows you to customize instance at boot time

- can build AMI to enhance your OS

- EC2 is billed by the second, with a minimum of 60 seconds
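The per-second billing rule with its 60-second minimum works out as follows. The hourly rate in the usage lines is a made-up figure for illustration, not a real EC2 price, and the function names are hypothetical.

```python
def billable_seconds(runtime_seconds: int) -> int:
    """Per-second billing with a 60-second minimum for any instance that ran."""
    if runtime_seconds <= 0:
        return 0
    return max(runtime_seconds, 60)


def cost(runtime_seconds: int, hourly_rate: float) -> float:
    """Cost of one run, given an hourly rate in dollars."""
    return billable_seconds(runtime_seconds) * hourly_rate / 3600


if __name__ == "__main__":
    rate = 0.10  # assumed hourly rate in dollars
    print(billable_seconds(10))   # a 10-second run is billed as 60 seconds
    print(cost(1800, rate))       # half an hour at the assumed rate
```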

ELB: Elastic Load Balancer

Since 2017, the new generation (v2) load balancers are the ones Amazon recommends

- Single Point of Access / DNS

- Spread load across multiple downstream instances

- Seamlessly handle failures

- Do regular health checks

- provide SSL

- Enforce stickiness with cookies

- High Availability across zones

- Separate public from private traffic

- can be internal or external

Stickiness

Stickiness keeps a user on the same EC2 instance across successive HTTP calls, instead of load balancing each call to a different instance. This way, session data held on that instance is not lost between calls.
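To picture how cookie-based stickiness behaves, here is a toy model in Python. It is only a sketch of the idea, not how ELB is implemented: the class name, the round-robin choice and the cookie format are all assumptions made for the example.

```python
import itertools
import uuid


class StickyBalancer:
    """Toy model of cookie-based stickiness (not the real ELB implementation)."""

    def __init__(self, instances):
        self._round_robin = itertools.cycle(instances)
        self._cookie_to_instance = {}

    def route(self, cookie=None):
        """Return (instance, cookie). A known cookie always maps to the same instance."""
        if cookie in self._cookie_to_instance:
            return self._cookie_to_instance[cookie], cookie
        # First request (or unknown cookie): pick the next instance and issue a cookie.
        instance = next(self._round_robin)
        cookie = uuid.uuid4().hex
        self._cookie_to_instance[cookie] = instance
        return instance, cookie


if __name__ == "__main__":
    lb = StickyBalancer(["i-aaa", "i-bbb"])      # hypothetical instance ids
    instance, cookie = lb.route()                # first call: no cookie yet
    print(instance, lb.route(cookie)[0])         # same instance on the next call
```

The trade-off is that sticky clients can unbalance the load: an instance keeps its users even when other instances are idle.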

CLB: Classic Load Balancer

Deprecated

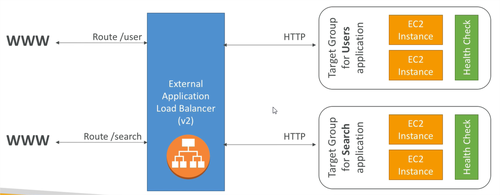

ALB: Application Load Balancer V2

For HTTP/HTTPS and WebSocket protocols. Great for microservices and container-based applications. It can load balance to:

- multiple HTTP applications across machines

- multiple applications on the same machine

- based on the route in the URL

- based on the hostname in the URL

- It has a port mapping feature to redirect to a dynamic port

- Stickiness can be enabled at the target group level

- The application server doesn't see the client's IP directly

- The true IP of the client is inserted in the X-Forwarded-For header
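On the application side, reading the client address from that header could look like the sketch below. The function name is hypothetical, and the headers are assumed to be a plain dict keyed by the exact header name; a real web framework usually exposes case-insensitive headers.

```python
def client_ip(headers: dict, peer_ip: str) -> str:
    """Return the original client IP for a request that came through a load balancer.

    X-Forwarded-For is a comma-separated list; the left-most entry is the
    address reported by the first proxy. Fall back to the TCP peer address
    when the header is absent or empty.
    """
    forwarded = headers.get("X-Forwarded-For", "")
    first = forwarded.split(",")[0].strip()
    return first or peer_ip


if __name__ == "__main__":
    # 203.0.113.7 is a documentation address; 10.0.1.25 stands for the load balancer.
    print(client_ip({"X-Forwarded-For": "203.0.113.7, 10.0.1.25"}, "10.0.1.25"))
    print(client_ip({}, "10.0.0.5"))
```

A client can send its own X-Forwarded-For value, so the header is fine for logging but should not be trusted on its own for access control.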

Network Load Balancer

For TCP traffic (layer 4)

Good To Know

- All load balancers have a static host name. Do NOT resolve it and use the underlying IP

- Load balancers can scale, but not instantaneously

- The NLB directly sees the client IP

- 4xx are client-induced errors

- 5xx are application-induced errors

- A 503 error means the load balancer is at capacity or has no registered target

- if the LB can't connect to the application, check the security group

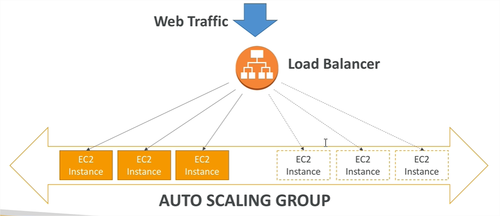

Auto Scaling Group

Auto Scaling Groups are free. An ASG restarts instances that get terminated. Attributes:

- Launch Configuration: AMI + instance type, EC2 User Data, EBS volumes, security groups, SSH key pair

- Min / max size and initial capacity

- Network + subnets information

- Load balancer information

CloudWatch Alarms

An ASG can scale based on CloudWatch alarms.

New Rules

The newer scaling rules are easier to set up. They can be based on:

- Target Average CPU Usage

- Number of requests on the ELB per instance

- Average Network In / Out
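One way to reason about a target-based rule is as a proportional calculation: if the metric is at twice its target, roughly twice as many instances are needed. The sketch below is a simplified model under that assumption, not AWS's actual scaling algorithm; the function name and parameters are hypothetical.

```python
import math


def desired_capacity(current: int, metric: float, target: float,
                     min_size: int, max_size: int) -> int:
    """Scale the group proportionally to metric/target, clamped to [min_size, max_size]."""
    if target <= 0:
        raise ValueError("target must be positive")
    wanted = math.ceil(current * metric / target)
    return max(min_size, min(max_size, wanted))


if __name__ == "__main__":
    # 4 instances at 80% average CPU with a 40% target: the model asks for more.
    print(desired_capacity(current=4, metric=80.0, target=40.0, min_size=1, max_size=10))
    # Same load, but the group is capped by its max size.
    print(desired_capacity(current=4, metric=90.0, target=40.0, min_size=1, max_size=6))
```

The clamp is the part that maps directly to the ASG attributes: whatever the rule computes, the group never goes below its min size or above its max size.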

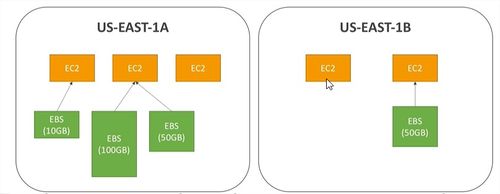

EBS Volumes: Elastic Block Store

A network drive attached to an instance, giving a way to store instance data outside the instance itself

Can be detached from one EC2 instance and attached to another very quickly

It is locked to one Availability Zone (AZ)

Volumes have a provisioned capacity

4 types of volume

- gp2 - SSD - balance of price and performance

- io1 - SSD - low latency

- st1 - HDD - frequently accessed data

- sc1 - HDD - less frequently accessed data

EBS Encryption

Encryption and decryption are handled transparently, with a minimal impact on latency.

- Data at rest is encrypted inside the volume

- Data in flight between the instance and the volume is encrypted, with nothing to do on your side

- Snapshots are encrypted

- Volumes created from snapshots are encrypted

EBS vs Instance store

Compared with EBS, an instance store:

- has better I/O performance

- is lost on termination

- cannot be resized

- must be backed up by the user

Route 53

- Public / private domains

- Load balancing through DNS

- Health checks

- Routing policy

- Alias vs CNAME: prefer an Alias record over a CNAME for AWS resources, for performance reasons

Domain

Hosted Zones

Record sets created by default:

- NS

- SOA

Create:

- A record to Load Balancer

- CNAME for subdomains

RDS: Relational Database Service

PostgreSQL, Oracle, MySQL, MariaDB, Microsoft SQL Server, Aurora

- Read replicas: replication is ASYNC, cross-AZ or cross-region, up to 5 replicas

- Usually deployed within a private subnet

- RDS security works by leveraging security groups, like EC2 instances

- IAM policies control who can MANAGE RDS

- To log in, a username / password can be used, or an IAM user

- Default port: 3306 (MySQL)

- The DB instance identifier must be unique within your account in a region

Advantages of RDS over a database deployed on EC2

- Continuous Backups

- OS Patching Level

- Replicas

- Multi AZ for DR: Disaster Recovery

- Maintenance Windows

- Scaling Capability

Multi AZ

Used for disaster recovery

Backups

- Automatically Enabled

Automated Backups

- Daily snapshot

- Transaction logs are captured in real time

- Restore to ANY point in time

- 7 days retention (can be increased up to 35 days)
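The retention period defines how far back a point-in-time restore can go. The check below is a minimal sketch of that window using plain date arithmetic; the function name is hypothetical and it ignores details such as the short lag before the most recent transactions become restorable.

```python
from datetime import datetime, timedelta, timezone


def can_restore_to(requested: datetime, now: datetime, retention_days: int = 7) -> bool:
    """True if `requested` falls inside the automated-backup retention window."""
    earliest = now - timedelta(days=retention_days)
    return earliest <= requested <= now


if __name__ == "__main__":
    now = datetime(2020, 1, 10, 12, 0, tzinfo=timezone.utc)   # arbitrary example date
    print(can_restore_to(now - timedelta(days=3), now))       # inside the window
    print(can_restore_to(now - timedelta(days=8), now))       # older than the retention
```

For anything older than the retention period, only a manually triggered snapshot can be used.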

Snapshots

- Manually Triggered

- Backups are retained for as long as you want

Encryption

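Difference between enforcing SSL and connecting using SSL: enforcing SSL is a server-side setting that rejects any connection not using SSL, whereas a client can choose to connect using SSL whether or not it is enforced.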

- Encryption at rest with KMS - AES-256

- SSL for data in flight

- To enforce SSL:

- PostgreSQL (in the DB parameter group):

<source lang="shell">rds.force_ssl=1</source>

- MySQL:

<source lang="MySQL">GRANT USAGE ON *.* TO 'mysqluser'@'%' REQUIRE SSL;</source>

Aurora

A proprietary technology from AWS - not Open Source

- Compatible with PostgreSQL and MySQL drivers

- Cloud optimized: 5 times the performance of MySQL on RDS, or 3 times the performance of PostgreSQL on RDS

- Storage grows automatically in 10 GB increments, up to 64 TB

- Can have 15 replicas

- Faster replication: sub-10 ms replica lag

- Native high availability; failover is instantaneous

- Costs about 20% more than RDS

- is not free-tier compatible

AWS ElastiCache

- To get managed Redis or Memcached

- Caches are in-memory databases with really high performance, low latency

- Helps reduce load on databases for read-intensive workloads

- Helps make your application stateless

- Write scaling using sharding

- Multi AZ with failover

- AWS takes care of: Maintenance, patching, optimizations, setup, configuration, monitoring, failure recovery, backups

ElastiCache Patterns

Lazy Loading

Write Through
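The two patterns differ in when the cache gets filled. In the sketch below, plain dictionaries stand in for the database and for ElastiCache, and the class names are made up for the example; it illustrates the control flow only, not a client for Redis or Memcached.

```python
class LazyLoadingCache:
    """Read path: try the cache first, load from the database only on a miss."""

    def __init__(self, database: dict):
        self.database = database   # stand-in for the real database (e.g. RDS)
        self.cache = {}            # stand-in for ElastiCache
        self.db_reads = 0

    def get(self, key):
        if key in self.cache:          # cache hit
            return self.cache[key]
        self.db_reads += 1             # cache miss: go to the database
        value = self.database.get(key)
        if value is not None:
            self.cache[key] = value    # populate the cache for the next read
        return value


class WriteThroughCache(LazyLoadingCache):
    """Write path: every database write also updates the cache."""

    def put(self, key, value):
        self.database[key] = value
        self.cache[key] = value


if __name__ == "__main__":
    lazy = LazyLoadingCache({"user:1": "alice"})
    lazy.get("user:1")
    lazy.get("user:1")
    print(lazy.db_reads)   # the second read was served from the cache
```

With lazy loading only requested data is cached, but it can become stale if the database changes behind the cache. Write through keeps the cache up to date at the price of an extra write on every update.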

Architecture Patterns

DB Cache

User Session Store

- Number 1: relieve load on the database

- Number 2: share state: user sessions are stored in a common place, so that all the applications can be stateless and can read and write these sessions in real time

Redis

- In-memory key-value store

- Super low latency

- Persistence: can survive reboots by default

- For: user sessions, leaderboards for gaming (there is a sorting capability), distributed states, relieving pressure on RDS databases, pub/sub for messaging

- Multi-AZ with automatic failover for disaster recovery

- Supports read replicas

- Cluster mode enabled => more persistence and more robustness

Memcached

- In-memory object store

- Doesn't survive reboots

- Quick retrieval of objects from memory

- Caches frequently accessed objects

- Redis is by far the more popular of the two

AWS VPC: Virtual Private Cloud

- VPCs are per account, per region

- Each VPC contains subnets

- It is common to have:

- a public subnet

- a private subnet

- many subnets per AZ

- It is possible to use a VPN to connect to a VPC
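To see how a VPC address range can be split into public and private subnets per AZ, the sketch below uses Python's standard `ipaddress` module. The VPC range, the /24 subnet size and the AZ names are assumptions chosen for the example, not values from these notes.

```python
import ipaddress

# Hypothetical VPC range and AZ names, chosen only for illustration.
vpc = ipaddress.ip_network("10.0.0.0/16")
azs = ["eu-west-1a", "eu-west-1b"]

# Carve /24 blocks out of the VPC range: one public and one private subnet per AZ.
blocks = vpc.subnets(new_prefix=24)
plan = {}
for az in azs:
    plan[az] = {"public": next(blocks), "private": next(blocks)}

for az, subnets in plan.items():
    print(az, "public:", subnets["public"], "private:", subnets["private"])
```

Because every block comes from the same generator, the subnets never overlap and all stay inside the VPC range.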

VPC Flow Logs

Allow you to monitor traffic within, into and out of your VPC