AWS Certified Developer Associate: Difference between revisions

Revision as of 23:58, 25 February 2020

Amazon Web Services

IAM

- Policies specify specific permissions

- Roles are collections of policies that are assigned to services

- Policies can be assigned to groups as well
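To make the idea of a policy concrete, here is a minimal sketch of an IAM policy document built in Python. The bucket name `example-bucket` and the chosen action are assumptions for illustration; they do not come from these notes.

```python
import json

# Hypothetical policy: allow read-only access to objects in one S3 bucket.
# "example-bucket" is an illustrative name, not a real resource.
policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            "Resource": "arn:aws:s3:::example-bucket/*",
        }
    ],
}

# IAM expects the policy as a JSON document.
policy_json = json.dumps(policy, indent=2)
print(policy_json)
```

A role or group would then have one or more such policies attached.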

Security

User pools

Directories that provide sign-up and sign-in options for your app users.

Anonymous Access

Anonymous Access creates Identity pools for you.

Identity Pools with Cognito

Identity pools provide AWS credentials to grant your users access to other AWS services.

Roles

Roles are collections of policies that are assigned to services.

IAM

In IAM, which areas need access restrictions? Compute, storage, database, and app services.

Development

DynamoDB

Schema-less database that only requires a table name and a primary key.
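As a rough illustration of "only a table name and a primary key", the sketch below builds parameters in the shape the DynamoDB CreateTable API accepts and checks two differently shaped items against the key. The table name `Users`, the key `user_id`, and the items are hypothetical, and the items are shown as plain dictionaries rather than in DynamoDB's typed wire format.

```python
# Parameters in the shape accepted by the DynamoDB CreateTable API.
# "Users" and "user_id" are hypothetical names chosen for illustration.
create_table_params = {
    "TableName": "Users",
    "KeySchema": [{"AttributeName": "user_id", "KeyType": "HASH"}],
    "AttributeDefinitions": [{"AttributeName": "user_id", "AttributeType": "S"}],
    "BillingMode": "PAY_PER_REQUEST",
}

# Items only have to carry the primary key; other attributes may differ per item.
items = [
    {"user_id": "u1", "email": "a@example.com"},
    {"user_id": "u2", "nickname": "bee", "age": 30},
]


def has_primary_key(item, params=create_table_params):
    """Check that an item carries every key attribute declared in KeySchema."""
    return all(k["AttributeName"] in item for k in params["KeySchema"])


print([has_primary_key(i) for i in items])
```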

Lambda

AWS Lambda is a computing service that lets you run code without provisioning or managing servers.

DynamoDB

Can you use Oracle RDBMS with DynamoDB? No; DynamoDB is a non-relational database, and Oracle is a relational database.

Messaging and Event Driven

Message => step functions => event/lambda => SNS => SQS => Lambda

State Machines

State machines are made up of states, their relationships, and the input and output defined by the Amazon States Language.
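A small sketch of what such a definition can look like, written as a Python dictionary that serializes to Amazon States Language JSON. The state names, the account ID, and the Lambda ARN are placeholders, and the `walk` helper is only an illustration of how states relate through `Next`.

```python
import json

# Hypothetical two-state machine; state names and the Lambda ARN are placeholders.
definition = {
    "Comment": "Illustrative state machine",
    "StartAt": "ProcessMessage",
    "States": {
        "ProcessMessage": {
            "Type": "Task",
            "Resource": "arn:aws:lambda:us-east-1:123456789012:function:process-message",
            "Next": "Done",
        },
        "Done": {"Type": "Succeed"},
    },
}


def walk(defn):
    """Follow the Next links from StartAt and return the visited state names."""
    order, name = [], defn["StartAt"]
    while name is not None:
        order.append(name)
        name = defn["States"][name].get("Next")
    return order


print(json.dumps(definition, indent=2))
print(walk(definition))
```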

SNS

SNS pushes its messages out to its subscribers.

SQS

SQS stores the messages until someone reads them and processes them off the queue. SQS is useful for sending and receiving messages between apps.
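The push versus pull difference can be sketched with two toy classes. These are in-memory stand-ins written for illustration, not the AWS SDK; the class and method names are assumptions.

```python
from collections import deque


class Topic:
    """Toy stand-in for an SNS topic: publishing pushes to every subscriber."""

    def __init__(self):
        self.subscribers = []

    def subscribe(self, callback):
        self.subscribers.append(callback)

    def publish(self, message):
        for callback in self.subscribers:
            callback(message)


class Queue:
    """Toy stand-in for an SQS queue: messages wait until a consumer reads them."""

    def __init__(self):
        self._messages = deque()

    def send(self, message):
        self._messages.append(message)

    def receive(self):
        return self._messages.popleft() if self._messages else None


topic, queue = Topic(), Queue()
topic.subscribe(queue.send)      # a queue subscribed to a topic (SNS => SQS)
topic.publish("order-created")   # pushed immediately to the queue
print(queue.receive())           # the consumer pulls when it is ready
print(queue.receive())           # nothing left on the queue
```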

Deployment, Scalability and Monitoring

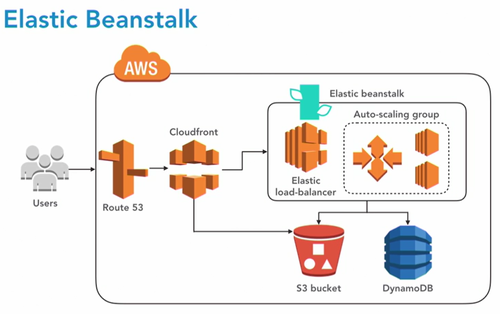

Elastic Beanstalk

Deploy and scale web apps and services

CloudFormation Stacks

Configure and maintain systems

CloudFormation

Provisions and manages stacks of AWS resources based on the template you create to model your infrastructure.
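A minimal sketch of such a template, built as a Python dictionary and serialized to JSON. The logical ID `NotesBucket` and the description are illustrative assumptions.

```python
import json

# Hypothetical template with a single S3 bucket; "NotesBucket" is an
# illustrative logical ID.
template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Description": "Illustrative stack with one S3 bucket",
    "Resources": {
        "NotesBucket": {"Type": "AWS::S3::Bucket"},
    },
}

template_body = json.dumps(template, indent=2)
print(template_body)
```

CloudFormation would create a stack from this body and manage the listed resources as a unit.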

ElastiCache

Service to launch, scale, and manage a distributed in-memory cache. Caching is great for performance and efficiency.

Cluster

- Redis

- Memcached
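Why caching helps can be shown with a cache-aside read, a common pattern that these notes do not name explicitly. In this sketch a plain dictionary stands in for the Redis or Memcached cluster, and `slow_lookup` is a hypothetical expensive call.

```python
import time

cache = {}  # stand-in for a Redis or Memcached cluster


def slow_lookup(key):
    """Hypothetical expensive call, e.g. a database query."""
    time.sleep(0.01)
    return key.upper()


def get(key):
    """Cache-aside read: try the cache first, fall back to the slow source."""
    if key not in cache:
        cache[key] = slow_lookup(key)
    return cache[key]


get("product-1")   # miss: hits the slow source and fills the cache
get("product-1")   # hit: served from memory
print(cache)
```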

CloudFront

Global content delivery network (CDN). CloudFront securely and quickly delivers data, video, applications, and APIs. Content is served from edge locations close to the users, which means shorter delivery distances and higher performance.

CloudWatch

You can create high-resolution alarms and automated actions. These alarms can monitor costs against your budget, metrics, events, and logs.

CloudWatch alarms are part of Elastic Beanstalk, and there are two of them. What are the two alarms? Both monitor load: one triggers when the load is too high for the Auto Scaling group, the other when it is too low.
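The two-alarm idea can be sketched as a simple decision function. The thresholds below are hypothetical values picked for the example, not Elastic Beanstalk defaults.

```python
def scaling_action(metric, low=20.0, high=70.0):
    """Return the scaling decision for one metric sample.

    The thresholds are hypothetical; they are not Elastic Beanstalk defaults.
    """
    if metric > high:
        return "scale_out"   # high alarm: add instances
    if metric < low:
        return "scale_in"    # low alarm: remove instances
    return "no_change"


for sample in (85.0, 45.0, 10.0):
    print(sample, scaling_action(sample))
```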

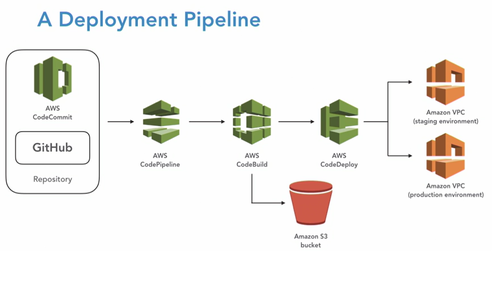

AWS: Deploying Your Application to the cloud

CodeCommit

Git-compatible, secure, and scalable source control. Can be triggered by AWS tools. It is recommended to use an IAM user; IAM has a specific section for CodeCommit credentials. Recommended: use the master branch as a trigger for deployments.

- Access: AWSCodeCommitFullAccess

How can you move files from another source control management tool to CodeCommit? Create an empty CodeCommit repo and clone it. Manually copy the files into the clone and commit them. Then push them to the repo.

Commands

- > aws configure

- > git --version

- > git config --global user.name

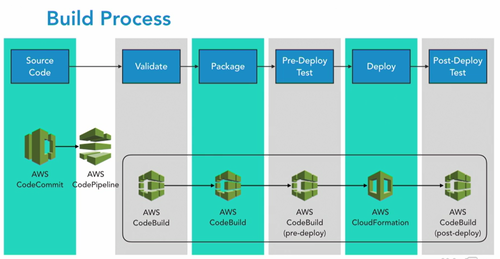

CodeBuild

buildspec.yml

- phases : install : commands

- phases : install : finally

The Java SDK and Maven have to be installed. We can have more than one buildspec file.

- How can you pass values to control your CodeBuild scripts? Use environment variables.

- Where can you find your build logs?

- CloudWatch logs

- CodeBuild console
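A short sketch of a build script that reads its settings from environment variables. The variable names `DEPLOY_STAGE` and `RUN_TESTS` are hypothetical; in CodeBuild they would be defined on the build project or in the buildspec.

```python
import os


# Hypothetical variable names; in CodeBuild they would be set on the build
# project or in the buildspec, then read by the build script like this.
def build_settings(env=os.environ):
    return {
        "stage": env.get("DEPLOY_STAGE", "dev"),
        "run_tests": env.get("RUN_TESTS", "true").lower() == "true",
    }


print(build_settings({"DEPLOY_STAGE": "prod", "RUN_TESTS": "false"}))
```

Passing a dictionary instead of `os.environ` keeps the function easy to try locally.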

Create Build Project

- Choose a managed image

- We are allowed to create a role

- Timeout is very important

- Specify a VPC if we need access to other servers or services during the build, but this isn't very common

- Choose s3 and a bucket to store the artifacts

- Standard format is zip

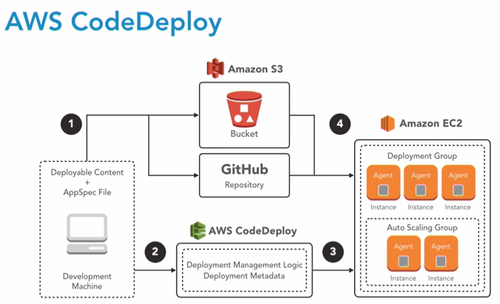

CodeDeploy

- appspec.yml: tells CodeDeploy what to do with our generated binaries and any additional files we may need

- Generally: takes the web archive and copies it to the webapps folder of a Tomcat web server, where Apache Tomcat deploys it automatically

- Files: source / destination

Deployment

Deployment groups

A way to identify the servers we are deploying to

- Name

- Role of EC2 to CodeDeploy

- Choose to uncheck the load balancer

- triggers, alarms, rollbacks

Create deployment

Now that we have an application and a deployment group, we can create a deployment

- Which files to deploy

- where to deploy them

s3 URL

s3://bucketname/file
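As a small illustration of the URL shape, the sketch below splits such a URL into bucket and key with the standard library. The helper name `split_s3_url` is an assumption made for this example.

```python
from urllib.parse import urlparse


def split_s3_url(url):
    """Split s3://bucket/key into (bucket, key)."""
    parsed = urlparse(url)
    if parsed.scheme != "s3" or not parsed.netloc:
        raise ValueError(f"not an S3 URL: {url}")
    return parsed.netloc, parsed.path.lstrip("/")


print(split_s3_url("s3://bucketname/file"))
```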

- Ruby is required by the CodeDeploy agent

- Download and run the install file:

- > wget https://aws-codedeploy-us-west-1.s3.amazonaws.com/latest/install

- > chmod +x ./install

- > sudo ./install auto

- > sudo service codedeploy-agent status

Important to check: EC2 Console > right-click server > Settings > IAM Role > S3Admin

- CodeDeploy, through its agent, will reach out to our S3 bucket holding the deployment binary

KeyPoints

What's needed to create a revision .zip file? An AppSpec file and the code binaries.
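A minimal sketch of assembling such a revision in memory with Python's `zipfile` module. The file name `app.war`, the destination path, and the placeholder contents are assumptions for illustration.

```python
import io
import zipfile

# Hypothetical file names and contents, for illustration only.
appspec = (
    "version: 0.0\n"
    "os: linux\n"
    "files:\n"
    "  - source: app.war\n"
    "    destination: /opt/tomcat/webapps\n"
)
binary = b"placeholder for the built web archive"

buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as bundle:
    bundle.writestr("appspec.yml", appspec)   # sits at the root of the archive
    bundle.writestr("app.war", binary)

# Reopen the archive to list what the revision contains.
with zipfile.ZipFile(buffer) as bundle:
    print(bundle.namelist())
```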

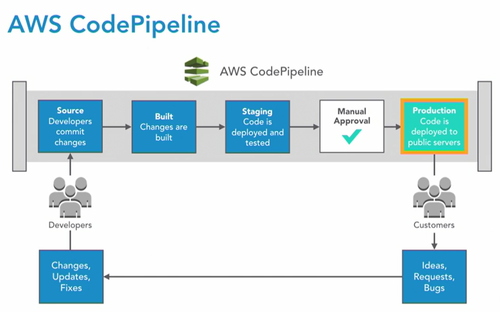

CodePipeline

Source > Build binary > Test > Production

Create a pipeline

- Create a role: this role allows CodePipeline to access the resources that this particular pipeline uses in our account

- Even AWS itself does not have access to resources within our account unless we provide privileges

- Specify a branch

- Choose a detection option: CodePipeline vs CloudWatch

Configure the build stage

- Choose a build provider: CodeBuild

- Configure: operating system, runtime, runtime version = can be upgraded in the buildspec.yml file

Deploy Stage

- Select Application and Deployment group

Go

As soon as the pipeline is created, it starts running, so make sure it is what you want.

Key Points

- Continuous integration and deployment pipeline

- various tools

- Passes the output to the next stage

- Jenkins-like; the difference is that it is a service

- Simple to complex workflows

- Supports approvals

When is it a good practice to add a CodePipeline approval step? During deployment to servers that are being used regularly. Disrupting QA testers by taking down their server unannounced is a bad practice. You should consider an approval for this, just like production deployment approvals.

- It is possible to generate more than one artifact on one build
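The stage-to-stage hand-off and the approval step can be sketched as a toy runner. This is not the CodePipeline API; the stage names follow Source > Build binary > Test > Production, and the actions and the `approve` callback are hypothetical.

```python
def run_pipeline(stages, approve):
    """Run stages in order, passing each stage's output artifact to the next."""
    artifact = None
    for name, action, needs_approval in stages:
        if needs_approval and not approve(name):
            return f"stopped before {name}"
        artifact = action(artifact)
    return artifact


# Hypothetical stages mirroring Source > Build binary > Test > Production.
stages = [
    ("Source", lambda _: "commit-abc", False),
    ("Build", lambda src: f"{src}.zip", False),
    ("Test", lambda art: art, True),               # shared QA server: ask first
    ("Production", lambda art: f"deployed {art}", True),
]

print(run_pipeline(stages, approve=lambda stage: True))
print(run_pipeline(stages, approve=lambda stage: stage != "Production"))
```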

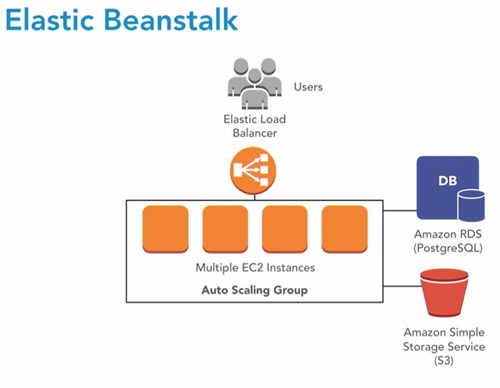

Elastic Beanstalk

- High availability

- Backups

- Health Checks

- Alarms

- Track Metrics

- Code deploy agents

- database: constant patching, updates, backups, monitoring

Create

what will we do

- Create a server

- give it a domain name

- install all the required software

- handle the deployment

- handle execution

Environment

In Beanstalk, an application is a container for environments. The environment is the application architecture. It is made up of:

- application servers

- web servers

- load balancers

- Auto Scaling group

- database

- ....

By default: web-based application. The "ugly" domain name can be modified with a CNAME, but if we want to change it, the environment has to be deleted.

Finally: it will trigger a CloudFormation build

Use it with CodePipeline

- send the compiled Java classes directly to Beanstalk

- create a secondary buildspec.yml file that creates an artifact

HOW TO: Pipeline > Edit > Edit Stage > Add Action > Create Project > Configure > Specify the name of the new buildspec.yml file > Give a name to the multiple output artifacts

KeyPoints

- deploys and manages web or background worker apps

- helps with updates, health checks, and alarms

- deployment strategies such as CodeDeploy

- there is a CLI

- Elastic Block Store for RDS

- YAML or JSON config files in .ebextensions

Data-Driven Serverless Applications with Kinesis

How to create a production-ready application that can scale, be cost-efficient, and support real-time input of data. Serverless architecture in the cloud and infrastructure as code:

- very scalable backend

- pay for what we use

- easy to maintain and extend

Serverless

- pay for what you use

- no need to manage infrastructure

- the system automatically scales

BaaS

DynamoDB, Algolia, Auth0

FaaS

Born in 2014 with AWS Lambda. Each function runs in a virtual container that is started and killed on each call of the function. The functions are triggered by events in AWS such as an HTTP request, a database change, a new file, a message in a queue ...

Kinesis

SQS

SNS

AWS Lambda

Invocation can be synchronous, asynchronous, or stream/poll-based

- function(

- Event Object: Data sent during invocation

- Context Object: Methods available to interact with the runtime information

- Callback: return information to the invoker

- );
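The signature above follows the Node.js callback style. A rough Python equivalent is sketched below, where the return value takes the place of the callback. The event field `name` and the `FakeContext` class are assumptions used only to run the handler locally.

```python
import json


def handler(event, context):
    """Entry point: `event` carries the invocation data, `context` the runtime info."""
    name = event.get("name", "world")
    # The returned value plays the role of the callback result.
    return {
        "statusCode": 200,
        "body": json.dumps(
            {"message": f"hello {name}", "request_id": context.aws_request_id}
        ),
    }


class FakeContext:
    """Local stand-in for the Lambda context object, for testing only."""

    aws_request_id = "local-test"


print(handler({"name": "Kinesis"}, FakeContext()))
```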